A new study finds that 26 to 19 thousand years ago, with CO2 concentrations as low as 180 ppm, fire activity was an order of magnitude more prevalent than today near the southern tip of Africa – mostly because summer temperatures were 3-4°C warmer.

We usually assume the last glacial maximum – the peak of the last ice age – was significantly colder than it is today.

But evidence has been uncovered that wild horses fed on exposed grass year-round in the Arctic, on Alaska’s North Slope, about 20,000 to 17,000 years ago, when CO2 concentrations were at their lowest and yet “summer temperatures were higher here than they are today” (Kuzmina et al., 2019). At that time horses had a “substantial dietary volume” of dried grasses year-round, even in winter. The Arctic is presently “no place for horses”, however, because there is too little for them to eat, and what food there is to eat is “deeply buried by snow” (Guthrie and Stoker, 1990).

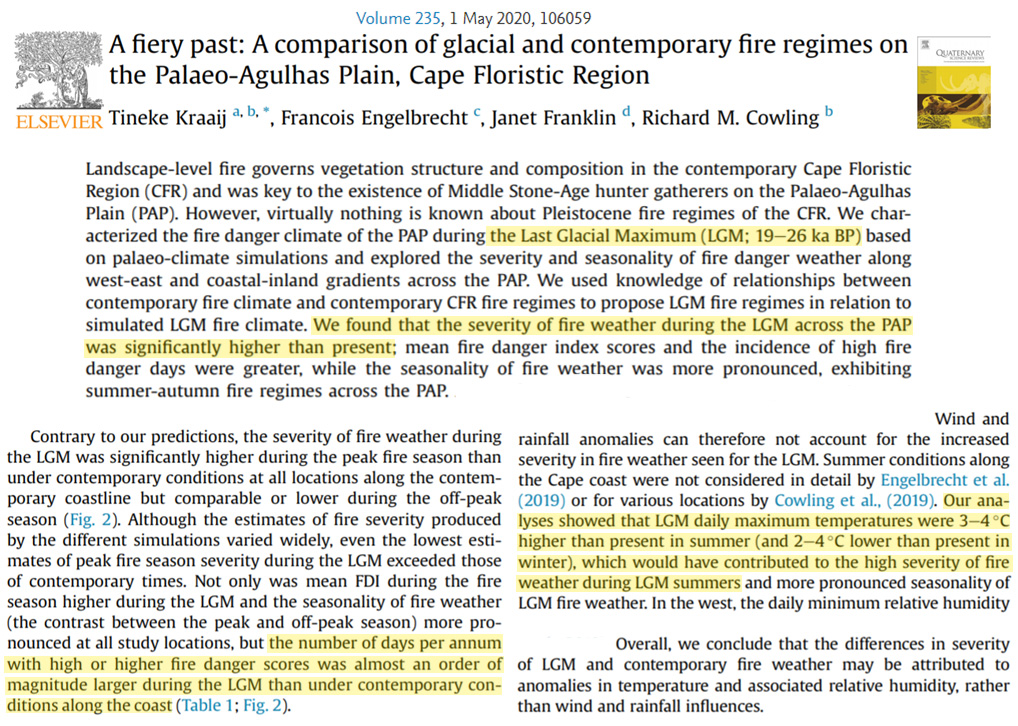

In a new study, Kraaij et al. (2020) find evidence that “the number of days per annum with high or higher fire danger scores was almost an order of magnitude larger during the LGM [last glacial maximum, 19-26 ka BP] than under contemporary conditions” near Africa’s southernmost tip, and that “daily maximum temperatures were 3-4°C higher than present in summer (and 2-4°C lower than present in winter), which would have contributed to the high severity of fire weather during LGM summers.”

Neither conclusion – that surface temperatures would be warmer or that fires would be more common – would seem to be consistent with the position that CO2 variations drive climate or heavily contribute to fire patterns.

[…] Read more at No Tricks Zone […]


[…] K. Richard, August 3, 2020 in […]

Thank you Kenneth and Pierre. These are extremely interesting studies.

Yes, CO2 is irrelevant. Unsurprisingly, climate is driven by our star, the Sun. Here are 3 proofs, each less than a 3-minute read:

https://www.researchgate.net/publication/343290627

https://www.researchgate.net/publication/341622566

https://www.researchgate.net/publication/338914556

I challenge anyone to read any of these and say they still believe CO2 is a ‘pollutant’.
