
By P Gosselin on 24. July 2024

History of the AGW narrative of the IPCC. Kyoji Kimoto, kyoji@mirane.co.jp, independent climate researcher. Manabe’s model studies debunked by Newell (1979). The anthropogenic global warming (AGW) scare was created in part by Japanese scientist Syukuro Manabe using a one-dimensional radiative-convective model (1DRCM) with no ocean (1964/1967). He obtained a no-feedback climate sensitivity of 1.3°C for […]

Posted in Climate Sensitivity, CO2 and GHG

By Kenneth Richard on 12. July 2024

A new study comprehensively eviscerates a 57-year-old modeling paper upon which nearly the entirety of the IPCC’s CO2-drives-climate paradigm is based. Dr. Roy Clark has published a new 73-page study that rips apart the Manabe and Wetherald (1967) paper (MW67) that effectively hatched the IPCC-popularized concepts of CO2 climate sensitivity, radiative forcing, and positive/negative feedbacks […]

Posted in Climate Sensitivity, CO2 and GHG

By P Gosselin on 19. June 2024

490,000 tonnes of CO2. It’s the European Cup soccer championship, and fans across the continent and beyond are celebrating the event. But some party poopers out there worry about the impact on the climate. By Klimanachrichten. Soccer fans should have a guilty conscience. The public broadcaster mdr here calculates what environmental idiots the […]

Posted in Alarmism, CO2 and GHG

By Kenneth Richard on 4. June 2024

In the 1970s and 1980s, ExxonMobil did not know that its reports would be so badly misinterpreted in the 2010s. Since 2015, when “investigative journalists” uncovered reports written in the late 1970s by ExxonMobil’s science advisor J.F. Black, it has been a common talking point in alarmist circles to insist that “Exxon Knew” about the […]

Posted in CO2 and GHG, Models, Uncertainty Error

By P Gosselin on 1. June 2024

Better air temporarily warms the atmosphere. Image: NASA (public domain). By Klimanachrichten. An interesting article in Spektrum covers a development that we have already reported on here: apparently there is a connection between cleaner ship fuels and reduced cloud formation, which means more sunshine and higher temperatures. However, the reduced content of atmospheric sulphate aerosols […]

Posted in CO2 and GHG, Pollution

By P Gosselin on 22. May 2024

With comparatively stable CO2 levels over 10,500 years, temperatures still fluctuated within a range of −4 to +3 °C. Yet the German Constitutional High Court preposterously claims there is an “almost linear relationship” between CO2 and temperature. Junk mandated as “science” by law? Alpine glaciers: spoilsports for the CO2 climate hypothesis. The CO2 introduced into the […]

Posted in Climate Politics, CO2 and GHG, Glaciers, Green Follies

By Kenneth Richard on 13. May 2024

Disturbing resting seafloor CO2 is yet another way humans are believed to be heating up the planet. Only 1% of the seafloor has been molested by dragging nets through the sand (trawling) in an effort to retrieve the seafood staples we enjoy. But that’s enough to wreak havoc on the Earth’s climate at the top […]

Posted in CO2 and GHG, Green Follies, Ocean Acidification, Oceans

By Kenneth Richard on 2. May 2024

“CO2 is only present in the atmosphere in trace amounts (0.04%) and lacks sufficient enthalpy to have any measurable effect on the atmosphere’s temperature.” – Nelson and Nelson, 2024. New research (Nelson and Nelson, 2024) further documents the inconsequential role that CO2 plays in climate. Less than 4% of longwave infrared radiation is absorbed by […]

Posted in Climate Sensitivity, CO2 and GHG, Paleo-climatology

By Kenneth Richard on 23. April 2024

Adding CO2 to the atmosphere can have no significant climatic effect once concentrations rise above a threshold of about 300 ppm. Due to saturation, ever-higher concentrations do not lead to any further absorption of radiation. If one were to paint a white surface black so as to allow it to absorb as much heat […]

Posted in Climate Sensitivity, CO2 and GHG

By Kenneth Richard on 8. April 2024

Reconstructions of paleo CO2 levels openly rely on data derived from plant stomata. But when modern (1800s–present) CO2 measurements from stomata conflict with the narrative that humans drive CO2 levels, they are flatly rejected. Scientists readily acknowledge that plant stomata data from one location are “widely used as an effective tool for paleoenvironmental reconstructions” of global […]

Posted in CO2 and GHG, Paleo-climatology

By Kenneth Richard on 18. March 2024

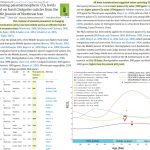

“From modern instrumental carbon isotopic data of the last 40 years, no signs of human (fossil fuel) CO2 emissions can be discerned.” – Koutsoyiannis, 2024. It is routinely claimed that a telltale sign that human (fossil fuel) emissions have irrevocably altered the atmospheric CO2 concentration is a declining trend in the carbon-13 isotope ratio (δ13C), considered an […]

Posted in CO2 and GHG, Emissions

By Kenneth Richard on 8. February 2024

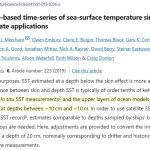

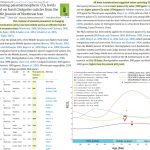

The shallowest sea surface temperature measurement limit is 10,000 times deeper than the extent of CO2’s radiative influence. When sea surface temperatures (SSTs) are measured, the depth range of the measurement typically extends from 10 cm to 10 m, or 100 mm to 10,000 mm (Merchant et al., 2019). Image Source: Merchant et al., 2019 […]

Posted in Climate Sensitivity, CO2 and GHG, Oceans
