Two German scientists describe what many western governments have been basing their energy and environmental policies on. It’s not pretty. What follows is an excellent review of climate modeling so far.

=======================================

Fun with Climate Models: Flops, Failures and Fumbles

By Dr. Sebastian Lüning and Prof. Fritz Vahrenholt

(Translated, edited by P Gosselin)

What’s great about science is that one can think up really neat models and see creativity come alive. And because there are many scientists, and not just one, there are lots of alternative models. Things only get bad when the day of reckoning arrives, i.e. when the work gets graded. This is when the prognoses are compared to the real, observed measurements. So who was on the right path, and who needs to go back to the drawing board?

When models turn out to be completely off, they are said to have been falsified and are thus considered to have no value. The validation of models is one of the fundamental principles of science, as Richard Feynman once said in a legendary lecture:

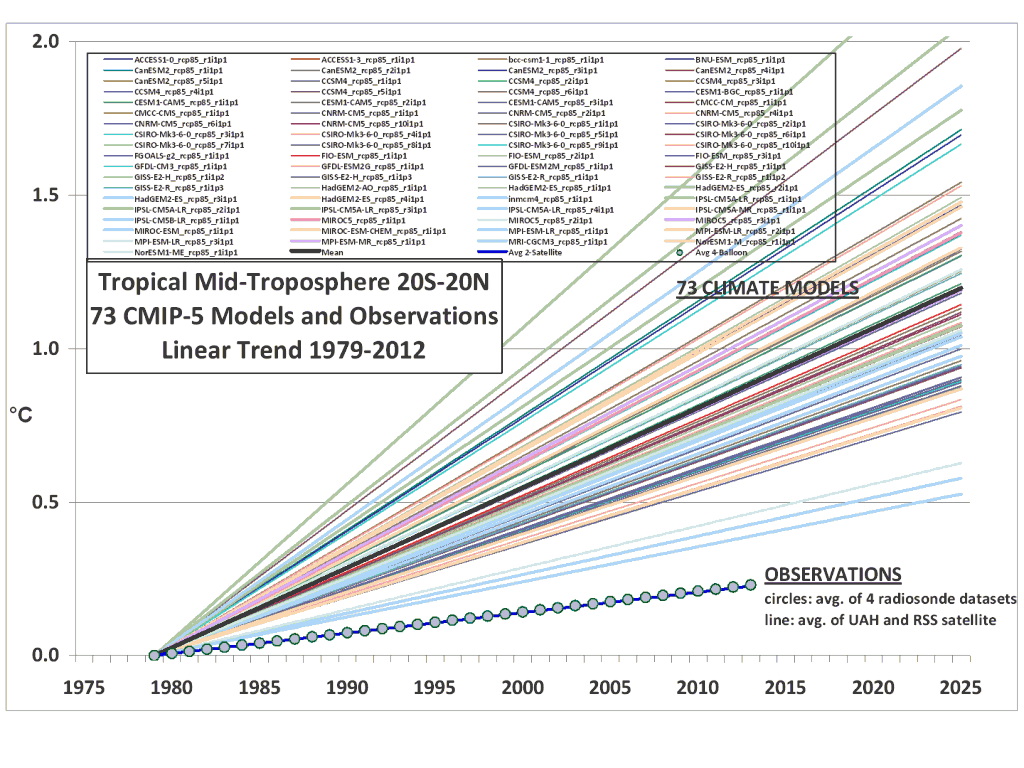

Failed hypotheses have been seen very often in science. A nice collection of the largest scientific flops is presented at WUWT. Unfortunately the climate sciences also belong to this category. Roy Spencer once compared an entire assortment of 73 climate models to the real observed temperature development, and they all ended up overshooting the target by far.

And yet another model failure has already appeared: In August 2009 Judith Lean and David Rind made a daring mid-term climate prognosis in Geophysical Research Letters. They predicted a warming of 0.15°C for the five-year period of 2009 to 2014. In truth it did not warm at all during the period. A bitter setback.

Over the last few years it has started to dawn on scientists that perhaps something was missing in their models. The false prognoses stick out like a sore thumb. Not a single one of the once highly praised models saw the current 16-year stop in warming as possible. In a September 2011 article in the Journal of Geophysical Research, Crook & Forster admitted that the superficial reproduction of the real temperature development in a climate model hardly meant the mechanisms were completely understood. The freely adjustable parameters are just too multifaceted, and as a rule they are selected in a way to fabricate agreement. And just because there is agreement, it does not mean predictive power can be automatically derived. What follows is an excerpt from the abstract by Crook & Forster (2011):

“In this paper, we breakdown the temperature response of coupled ocean‐atmosphere climate models into components due to radiative forcing, climate feedback, and heat storage and transport to understand how well climate models reproduce the observed 20th century temperature record. Despite large differences between models’ feedback strength, they generally reproduce the temperature response well but for different reasons in each model.”

In a member journal of the American Geophysical Union (AGU), Eos, Colin Schultz took a look at the article and did not mince any words:

“Climate model’s historical accuracy no guarantee of future success

To validate and rank the abilities of complex general circulation models (GCMs), emphasis has been placed on ensuring that they accurately reproduce the global climate of the past century. But because multiple paths can be taken to produce a given result, a model may get the right result but for the wrong reasons.”
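The idea that a model can match the past "for the wrong reasons" can be sketched with a deliberately crude toy. This is not how any GCM works: the linear response, the `simulate` function, the two tunings and every number below are assumptions made up purely for illustration. Two parameter sets with compensating sensitivity and aerosol strength reproduce the same made-up "historical" record, yet diverge as soon as the mix of forcings changes.

```python
# Toy illustration only: all names and numbers are hypothetical,
# not taken from Crook & Forster or from any real climate model.

def simulate(sensitivity, aerosol_scale, ghg_forcing, aerosol_forcing):
    """Temperature response as sensitivity times net forcing (toy linear model)."""
    return [
        sensitivity * (g + aerosol_scale * a)
        for g, a in zip(ghg_forcing, aerosol_forcing)
    ]

# Made-up "historical" forcings: aerosols offset half of the greenhouse forcing.
hist_ghg = [0.0, 0.5, 1.0, 1.5, 2.0]
hist_aerosol = [0.0, -0.25, -0.5, -0.75, -1.0]

# Made-up future step: greenhouse forcing keeps rising, aerosol cooling shrinks.
future_ghg = [3.0]
future_aerosol = [-0.5]

# Two tunings with compensating parameters.
model_a = {"sensitivity": 0.8, "aerosol_scale": 1.0}  # sensitive, strong aerosol cooling
model_b = {"sensitivity": 0.5, "aerosol_scale": 0.4}  # less sensitive, weak aerosol cooling

hindcast_a = simulate(ghg_forcing=hist_ghg, aerosol_forcing=hist_aerosol, **model_a)
hindcast_b = simulate(ghg_forcing=hist_ghg, aerosol_forcing=hist_aerosol, **model_b)
forecast_a = simulate(ghg_forcing=future_ghg, aerosol_forcing=future_aerosol, **model_a)
forecast_b = simulate(ghg_forcing=future_ghg, aerosol_forcing=future_aerosol, **model_b)

print("hindcast A:", hindcast_a)
print("hindcast B:", hindcast_b)
print("forecast A:", forecast_a)
print("forecast B:", forecast_b)
```

In this toy the two hindcasts coincide because the aerosol forcing happens to be proportional to the greenhouse forcing over the "historical" period; once that proportionality breaks, the tunings no longer compensate and the forecasts separate.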

In the meantime, sobriety has also spread to IPCC-friendly blogs. On April 15, 2013, in a guest post at Real Climate, Geert Jan van Oldenborgh, Francisco Doblas-Reyes, Sybren Drijfhout and Ed Hawkins made it clear that the models used in the 5th IPCC report were completely inadequate for regional climate prognoses:

“To conclude, climate models can and have been verified against observations in a property that is most important for many users: the regional trends. This verification shows that many large-scale features of climate change are being simulated correctly, but smaller-scale observed trends are in the tails of the ensemble more often than predicted by chance fluctuations. The CMIP5 multi-model ensemble can therefore not be used as a probability forecast for future climate. We have to present the useful climate information in climate model ensembles in other ways until these problems have been resolved.”

Christensen and Boberg (2012) were also critical of the AR5 models in a paper appearing in Geophysical Research Letters. The scientists presented their main results:

“– GCMs suffer from temperature-dependent biases

– This leads to an overestimation of projections of regional temperatures

– We estimate that 10-20% of projected warming is due to model deficiencies”

In January 2013, in the Journal of Climate article “An Empirical Benchmark for Decadal Forecasts of Global Surface Temperature Anomalies”, Matthew Newman reported on the notable limitations of the models:

“These results suggest that current coupled model decadal forecasts may not yet have much skill beyond that captured by multivariate red noise.”

On decadal prognosis time frames, the models do not perform better than noise. An embarrassment.

Frankignoul et al. (2013) also expressed serious concerns in the Journal of Climate about the unimpressive performance of the climate models. They plainly graded the models as “unrealistic” because they did not implement the role of ocean cycles correctly.

In July 2013, in a paper in Geophysical Research Letters, Ault et al. looked at the models for the tropical Pacific region. They made an awful discovery: Not one of the current models is able to reproduce the climate history of the region during the past 850 years. Excerpts from the abstract:

“[…] time series of the model and the reconstruction do not agree with each other. […] These findings imply that the response of the tropical Pacific to future forcings may be even more uncertain than portrayed by state-of-the-art models because there are potentially important sources of century-scale variability that these models do not simulate.”

Lienert et al. (2011) also found problems with the North Pacific. And in a July 2014 article in Environmetrics, McKitrick & Vogelsang documented a significant overestimation of the warming in the climate models for the tropical region over the past 60 years.

In March 2014 Steinhaeuser & Tsonis reported in Climate Dynamics on a comparison of 23 different climate models and the extent to which they were able to reproduce temperature, air pressure and precipitation over the 19th and 20th centuries. The surprise was great when the scientists found that the model results deviated widely from each other and were unable to give a correct account of reality. A more detailed discussion is available at The Hockey Schtick.

In a press release from September 17, 2012, scientists of the University of Arizona complained that as a rule climate models failed when looking at periods of three decades or less. Attempts at prognoses at regional levels were also unsuccessful:

“UA Climate Scientists put predictions to the test

A new study has found that climate-prediction models are good at predicting long-term climate patterns on a global scale but lose their edge when applied to time frames shorter than three decades and on sub-continental scales.”

In October 2012 Klaus-Eckart Puls at EIKE warned that up to now the temperature prognoses of the climate models have been wrong for every atmospheric layer:

“For some decades now climate models have been projecting trends (“scenarios”) for temperature for different layers of the atmosphere: near surface layer, troposphere, and stratosphere. From the near surface layer all the way to the upper troposphere it was supposed to get warmer according to the AGW hypothesis, and colder in the stratosphere. However meteorological measurements taken from all atmospheric layers show the exact opposite!”

So what is wrong with the models?

For one, they still have not found a way to implement the empirically confirmed systematic impact of the ocean cycles into the models. Another problem of course is that the sun is missing in the models, as its important impact on climate development continues to be denied. It’s still going to take some time before the sun finally gets a role in the models. But there are growing calls for taking the sun into account and growing recognition that something is awry. In August 2014 a paper by Timothy Cronin appeared in the Journal of the Atmospheric Sciences. It criticized the treatment of solar irradiance in the models. See more on this at The Hockey Schtick.

The poor prognosis capability of climate models is giving more and more political leaders cause for concern. Maybe they should not have relied on the model results and developed far-reaching plans to change society. To some extent they have already begun to implement these plans. Suddenly the very credibility of the climate protection measures finds itself at stake.

The best would be a moratorium on models. Something needs to be done. It is becoming increasingly clear that the present wild modeling simply cannot continue. It’s time to re-evaluate. The climate models so far are hardly distinguishable from computer games on climate change where one sits comfortably on the couch and shoots as many CO2 molecules out of the atmosphere as he can and then reaps the reward of a free private jet flight with climate activist Leonardo DiCaprio.

===================================

Fritz Vahrenholt and Sebastian Lüning authored the climate science book The Neglected Sun. In this book they examined the poor quality of climate models and why they will always fail.

It is a delusion that the present climate models predict anything. This has been confirmed by 100% of the models. But they soldier on as if they have proved something. What have they proved? What was that definition of insanity? Oh, yes: Trying the same thing over and over again, expecting a different result. In this case, a successful result. If they had put the same effort into observing the world accurately and attempting to puzzle out all the various linkages, instead of continual scaremongering and trying to prove the scare, we might have made some progress by now. Instead, we must examine thousands of so-called peer-reviewed papers and cull the ones that pre-suppose doom and gloom. It will take the next hundred years to undo the damage of the climate delusion.

One real problem I see is that models are useful in many other fields as well, but now there is the risk that their reputation will be so damaged by the climate modeling scandal that it seriously risks setting back science as a whole. Unfortunately a few rotten apples risk spoiling the entire barrel.

No. The implosion of an entire scientific field will serve as a warning to the survivors to STOP CHEATING.

Which is an improvement.

So… the climate modelers: did they really not know about the whimsical nature of their enterprise when they started? Wasn’t the entire enterprise started by flim-flam man #1, Steve Schneider himself, who seamlessly switched from an ice age alarmist to a warming alarmist when it became expedient?

Wasn’t a science from the start. Scientific rules don’t apply. RICO applies.

Sorry, I don’t agree with a moratorium; what is needed is competent modelling.

Recent findings by groups like Meehl et al. and the UK Met Office show that including the natural 60-year ocean cycles improves general circulation model (GCM) performance in modelling the temperature record (i.e. the “pause” or “hiatus”).

What is needed is for the Svensmark mechanism to be included in a GCM along with the ocean cycles. If someone does that, I suspect their modelling skill would be very good.

Unfortunately it would then show equilibrium climate sensitivity is even lower than Curry and Lewis have just reported. That would kill the global warming scare dead…and also kill their own model funding.

We should look at the level of funding though.

The supercomputers the climate modelers use are pretty expensive. Yet the skill of the models has not improved with increased computational power.

As computational cost continues to halve once every 18 months, I therefore suggest halving the funding for climate modeling once every 18 months. This way, the climate modelers can continue to use a constant amount of computational power while they improve their programs.
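The arithmetic behind that suggestion can be checked with a quick sketch. The starting budget, the starting unit cost and the `funding_and_compute` helper are all made-up assumptions for illustration; the only inputs taken from the comment are the 18-month halving of both cost and funding.

```python
# Hypothetical numbers: a budget of 100 units and a cost of 1 unit per
# unit of compute at month 0. Only the 18-month halving comes from the text.

def funding_and_compute(months, initial_budget=100.0, initial_unit_cost=1.0,
                        halving_period=18):
    """Return (budget, affordable compute) after the given number of months."""
    factor = 0.5 ** (months / halving_period)
    budget = initial_budget * factor        # funding halves every period
    unit_cost = initial_unit_cost * factor  # cost of compute halves every period
    return budget, budget / unit_cost

for months in (0, 18, 36, 54):
    budget, compute = funding_and_compute(months)
    print(f"month {months}: budget {budget:.2f}, affordable compute {compute:.2f}")
```

Because the same factor scales both the budget and the unit cost, it cancels in the ratio, so the affordable compute stays at its starting value for as long as both halvings hold.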

The present hard-line believer position keeps the matter fossilized in a take-it-or-be-a-denier, tit-for-tat struggle: mankind loses time.

The only way to go ahead without pre-frozen ideas would be for alarmists to confess they had superficially prepared their “models” and twisted parameters in order to yield anti-industry predictions (a fetish of leftist propaganda).

But for these guys, admitting this is worse than committing suicide…

Only politicians could react, IF the media stopped inflating their stupid bubble. They don’t even understand yet that reversing course would provide them with decades’ worth of material for attractive papers concerning the AGW Witch Hunt….

Off-topic message: another country is recognising the problems with wind power, this time it is Canada.

http://tallbloke.wordpress.com/2014/10/01/sun-news-down-wind-sends-wind-industry-into-tail-spin/

The modellers have been caught in their own web. I think the models were never intended to do the climate, but only to investigate various processes and to do short-term weather.

Somehow they got hijacked to do global climate, and the money and fame were more than the modellers could withstand.

It won’t do to add more variables/processes; it will only add to the chaos, and you will lose any insight into the climate and the dependencies.

The modelers were always about the grand global catastrophes.

A very early global model was the Nuclear Winter model. (Which, ahem, negatively blows.)

http://www.textfiles.com/survival/nkwrmelt.txt

All a futile diversion from reality. A wide review of all things ‘Energy’ will certainly be necessary, I think, if my ‘light at the end of the tunnel’ find is how to create new ways forward:

http://cleanenergypundit.blogspot.co.uk/2014/10/tygerfound-whereas-1.html